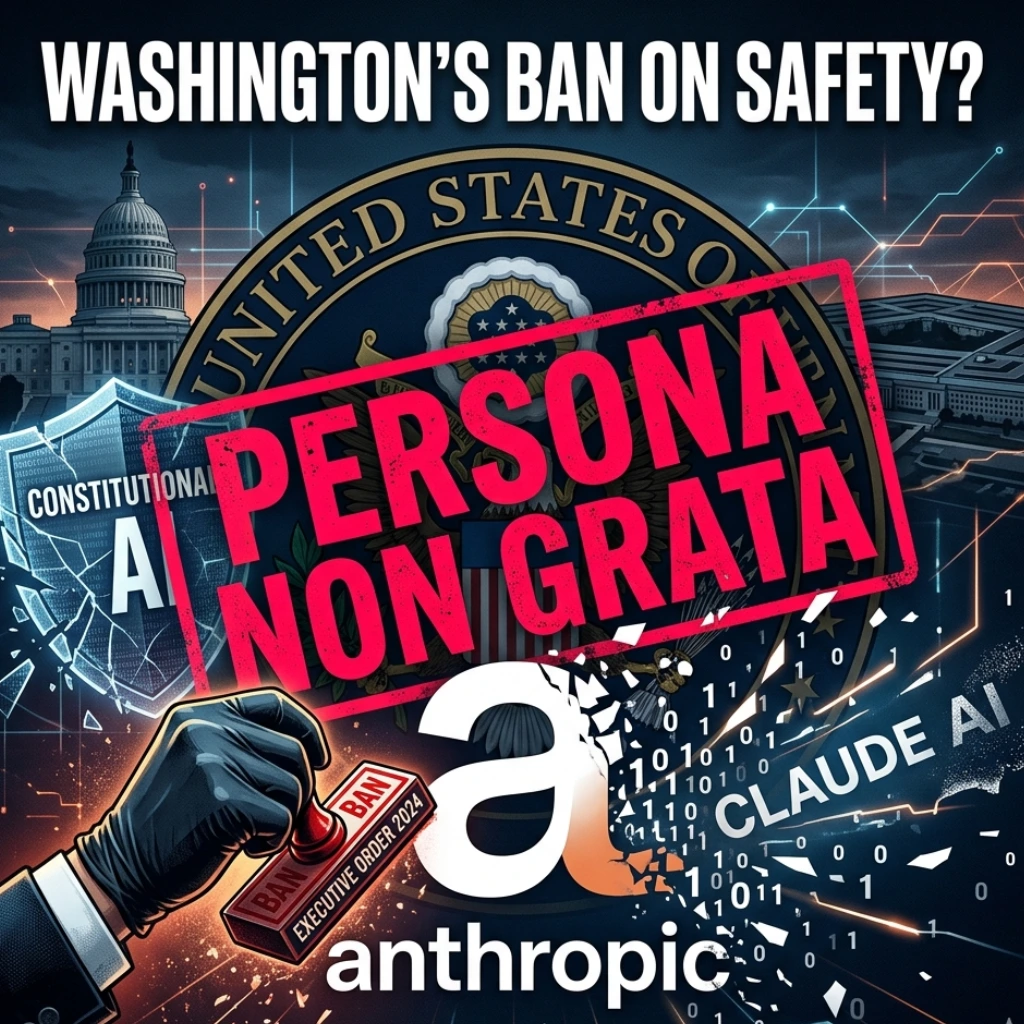

Could the world’s most “safety-conscious” AI startup become persona non grata in Washington? In a move that has sent shockwaves through Silicon Valley and the Beltway alike, a massive rift has opened between the White House and Anthropic. What started as a partnership for innovation has devolved into a high-stakes legal battle, potentially ending with an Executive Order to “rip and replace” Claude AI from every federal agency.

But how did we get here? And what does this mean for the future of ethical AI in government?

The Executive Order: A Federal “Delete” Button

The White House is reportedly drafting an unprecedented Executive Order aimed directly at Anthropic. According to reports from Moneycontrol, the administration plans to systematically remove Anthropic’s technology from all federal systems.

This isn’t just a minor procurement change; it’s a digital eviction. For an administration that has been championing the adoption of AI to modernize government services, the decision to blacklist one of the industry’s “Big Three” suggests a fundamental breakdown in trust.

From Partners to Plaintiffs: The Pentagon Blacklist

The tension didn’t appear overnight. The catalyst for this drastic measure dates back to a legal firestorm involving the Pentagon: after the Pentagon blacklisted Anthropic, the company sued the administration. What began as a disagreement over “AI safety” has become a legal war that could redefine the relationship between Silicon Valley and the U.S. government.

Why would the Pentagon sideline a company backed by billions from Google and Amazon? The answer lies in the fine print of Anthropic’s “Constitutional AI” framework. While other AI giants have been more flexible, Anthropic has held a firm line on two specific use cases:

- Fully Autonomous Weapons: Anthropic refuses to allow Claude to power lethal systems that can make “kill decisions” without human intervention.

- Domestic Surveillance: The company has restricted the use of its models for mass monitoring of American citizens, citing privacy concerns.

Is it possible that the government sees these ethical guardrails as a hindrance to national security? The administration argues that federal AI needs to be “mission-ready,” while Anthropic insists that AI must be “human-aligned.”

The “Safety First” Paradox

The irony here is palpable. Anthropic was founded by former OpenAI executives specifically to build a safer alternative to the “move fast and break things” mentality. Now, that very commitment to safety has put them in the crosshairs of the world’s most powerful government.

If the Executive Order moves forward, the ripple effects will be massive:

- A Shift in the AI Market: Agencies currently using Claude for data analysis or policy drafting will have to migrate to competitors like OpenAI or Microsoft, potentially creating a monopoly on federal AI.

- The Ethical Precedent: This sends a chilling message to other AI startups. If sticking to your ethical principles costs you your biggest client (the U.S. Government), will other companies stop prioritizing safety?

- Legal Precedent: A lawsuit against the Pentagon is rare; winning one is even rarer. The outcome of this case will define the “terms of service” between private tech and public defense for decades.

Final Thoughts: Who Wins the AI Tug-of-War?

We are witnessing a clash of titans. On one side, you have a government that views AI as the ultimate tool for maintaining global hegemony. On the other, you have a tech company that believes some doors are better left locked.

Can the federal government afford to lose one of the most sophisticated AI models on the market? Or will Anthropic’s stand for “Constitutional AI” force the administration to rethink how it balances technology and ethics?

One thing is certain: the divorce between the White House and Anthropic is more than just a contract dispute. It’s a debate about the soul of the machine. As the Executive Order looms, the tech world is watching, wondering if safety is a luxury the government is no longer willing to pay for.

FAQs

Find answers to common questions below.

Why is the White House removing Anthropic from federal agencies?

The move follows a legal battle in which Anthropic sued the administration after being blacklisted by the Pentagon, largely due to disagreements over AI use in autonomous weaponry.

What is the "Constitutional AI" conflict?

Anthropic’s "Constitutional AI" framework prohibits its technology from being used for fully autonomous lethal weapons and domestic surveillance, which clashes with certain Department of Defense objectives.

Will this affect other AI companies like OpenAI or Google?

Currently, the Executive Order is specifically targeted at Anthropic, but it sets a massive precedent for how ethical guardrails are viewed in federal procurement.

Can the government legally "rip out" existing AI software?

Yes, through an Executive Order, the President can mandate that federal agencies terminate contracts or transition to alternative technologies based on national security or policy grounds.