The days of “move fast and break things” in the world of Artificial Intelligence might be coming to a screeching halt. For the last year, the tech industry has felt like the Wild West: models were built, tested behind closed doors, and then unleashed onto the public to see what happens. But what if the next big LLM had to pass a government inspection before you could even type a prompt?

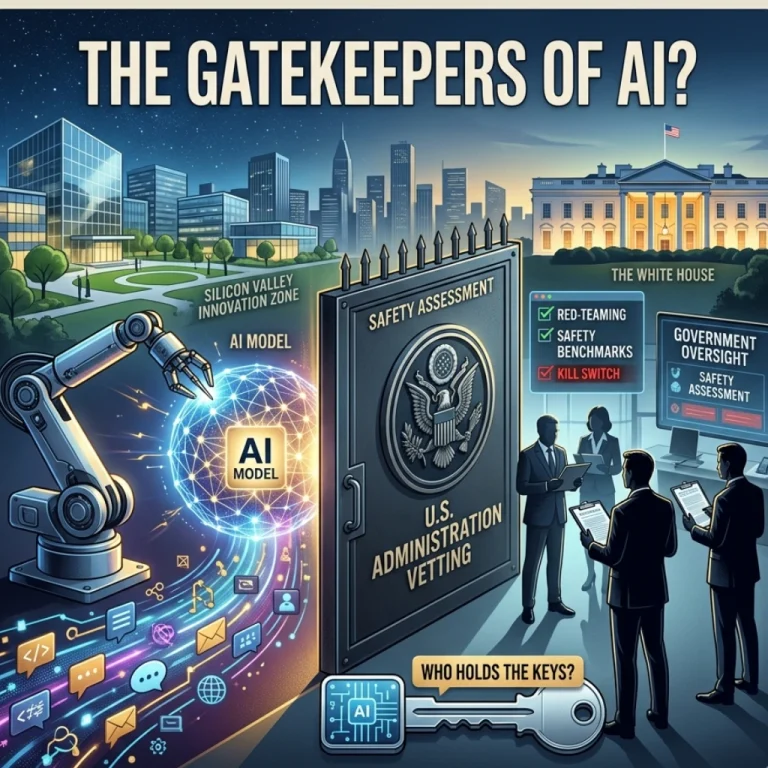

According to a recent report titled “White House mulls pre-release vetting of AI models,” the U.S. administration is considering a massive policy shift. We’re talking about an executive order that could establish a dedicated working group of tech executives and government officials to vet new AI models before they ever see the light of day.

A Massive Reversal: From Hands-Off to Hands-On

For decades, the U.S. government has largely let Silicon Valley police itself. This “hands-off” approach is exactly how the internet grew into the giant it is today. But AI is a different beast entirely. Unlike a social media algorithm, a rogue AI model has the potential to impact national security, facilitate cyberwarfare, or spread misinformation at a scale we’ve never seen.

Senior officials have reportedly already begun briefing leaders at OpenAI, Google, and Anthropic. It’s a clear signal that the era of voluntary commitments is ending. But this raises a burning question: Can a government body actually keep pace with the lightning-fast development of neural networks?

What This Vetting Process Could Look Like

While the exact details are still being hammered out, the proposed working group aims to bridge the gap between private innovation and public safety. Here’s what we might see:

- Red-Teaming Requirements: Rigorous testing to see if a model can be “tricked” into helping someone build bioweapons or carry out sophisticated cyberattacks.

- Safety Benchmarks: Standardized metrics that every model must meet before public deployment.

- The “Kill Switch” Conversation: Discussions on how to handle models that show signs of unpredictable or “emergent” dangerous behaviors.

- Tech-Government Collaboration: A revolving door of expertise where researchers from companies like Anthropic work alongside federal safety experts.
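
To make the first two ideas concrete, here is a minimal sketch of what a pre-release “gate” could look like if red-team results and safety benchmarks were combined into a single pass/fail decision. Everything in it is an assumption for illustration: the `Benchmark` class, the `release_decision` function, the benchmark names, the thresholds, and the 1% failure-rate limit are all hypothetical, and nothing here comes from the reported proposal.

```python
from dataclasses import dataclass


@dataclass
class Benchmark:
    name: str
    score: float      # measured score from 0.0 to 1.0 (higher is safer)
    threshold: float  # hypothetical minimum score required to pass


def release_decision(benchmarks, red_team_attempts, red_team_failures,
                     max_failure_rate=0.01):
    """Return (approved, reasons) for a hypothetical pre-release gate."""
    reasons = []

    # Every benchmark must meet its own minimum score.
    for b in benchmarks:
        if b.score < b.threshold:
            reasons.append(f"{b.name}: {b.score:.2f} below {b.threshold:.2f}")

    # Red-teaming must have happened, and the share of attempts that
    # produced dangerous output must stay under the allowed rate.
    if red_team_attempts == 0:
        reasons.append("no red-team testing performed")
    else:
        rate = red_team_failures / red_team_attempts
        if rate > max_failure_rate:
            reasons.append(
                f"red-team failure rate {rate:.1%} exceeds {max_failure_rate:.1%}"
            )

    # Approved only if nothing was flagged.
    return (not reasons, reasons)


# Example with made-up numbers: one benchmark falls short, so the model is held back.
approved, reasons = release_decision(
    [
        Benchmark("refusal_of_harmful_requests", 0.97, 0.95),
        Benchmark("cyber_misuse_resistance", 0.88, 0.90),
    ],
    red_team_attempts=500,
    red_team_failures=3,
)
print(approved)  # False
print(reasons)   # ['cyber_misuse_resistance: 0.88 below 0.90']
```

The hard part in practice is not the code but agreeing on which benchmarks count and where the thresholds sit, which is exactly what a working group would have to decide.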

The Friction Between Innovation and Regulation

Is this a necessary safety net or a bureaucratic bottleneck? If you ask a developer, they’ll tell you that innovation thrives on speed. If a model has to sit in a government “waiting room” for six months, the U.S. risks falling behind international competitors who aren’t bound by the same red tape.

On the other hand, can we really afford to wait for a “catastrophic event” before we start regulating? We’ve seen how slowly the world reacted to social media’s impact on mental health and democracy. This time, the White House seems determined to get ahead of the curve. But does the government have the technical talent to vet a model that even its own creators don’t fully understand?

Why This Matters for You

This isn’t just a “tech industry problem.” It affects how you’ll use AI in your daily life. If vetting becomes too strict, we might see “sanitized” versions of AI that are less capable but safer. Conversely, it could lead to a higher level of trust in the tools we use for education, medicine, and business.

Key highlights of the shifting landscape:

- The shift from voluntary safety pledges to mandated oversight.

- Increased scrutiny of large-scale compute power and data privacy.

- A focus on preventing AI-generated deepfakes from disrupting democratic processes.

Final Thoughts

We are watching the blueprint of the future being drawn in real time. The move toward a White House-led vetting group marks a turning point where AI stops being a “cool tech toy” and starts being treated like a nuclear asset or a new pharmaceutical drug.

Will this collaboration lead to a safer digital future, or will it simply move the “Wild West” of AI development to countries with fewer rules? Only time, and the next executive order, will tell. Are we ready for a world where the government holds the keys to the next big algorithm?

FAQs

Find answers to common questions below.

What exactly is "red-teaming" in the context of White House AI vetting?

It’s essentially a high-stakes stress test where experts try to "break" the AI or trick it into providing dangerous information (like chemical formulas or hacking code) to ensure it's safe for the public.

Will this make AI tools like ChatGPT slower or less capable?

There is a trade-off. While vetting is meant to improve safety, strict regulations could lead to "over-alignment," where AI becomes more hesitant to answer complex questions to avoid violating safety protocols.

Could this move give an advantage to countries like China?

This is the billion-dollar question. Critics argue that while the U.S. vets its models, international competitors might race ahead without restrictions, potentially winning the global AI arms race.

Who will actually sit on this vetting board?

The proposal suggests a mix of high-level tech executives from companies like Anthropic and Google, alongside federal national security officials and independent AI safety researchers.