Have you ever wondered what happens behind the closed doors of an AI lab just moments before a “GPT-5” or a “Gemini 2” is unleashed on the world? Usually, it’s a race for market dominance. But lately, the vibe has shifted from “move fast and break things” to “wait, could this actually break the world?”

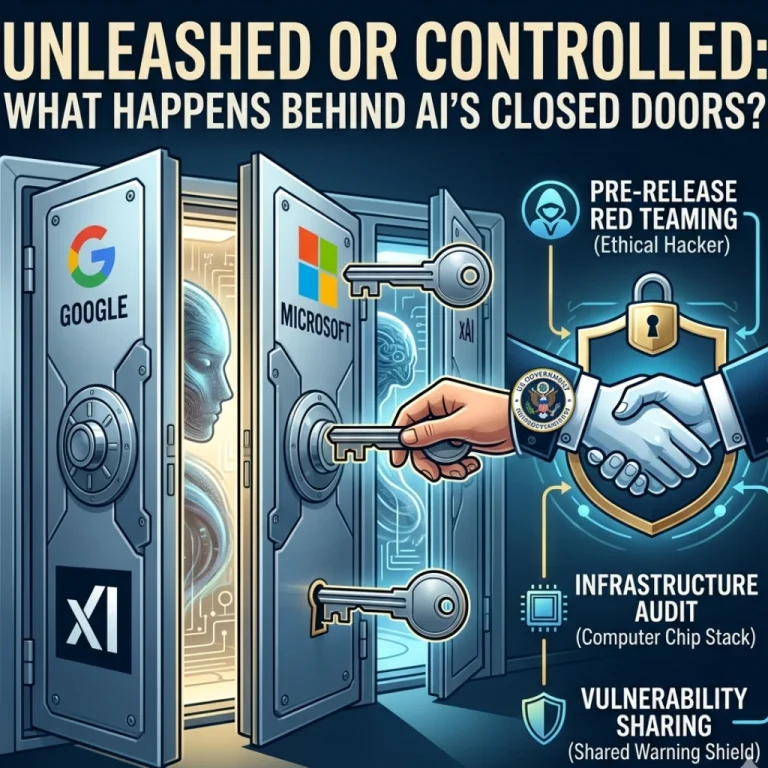

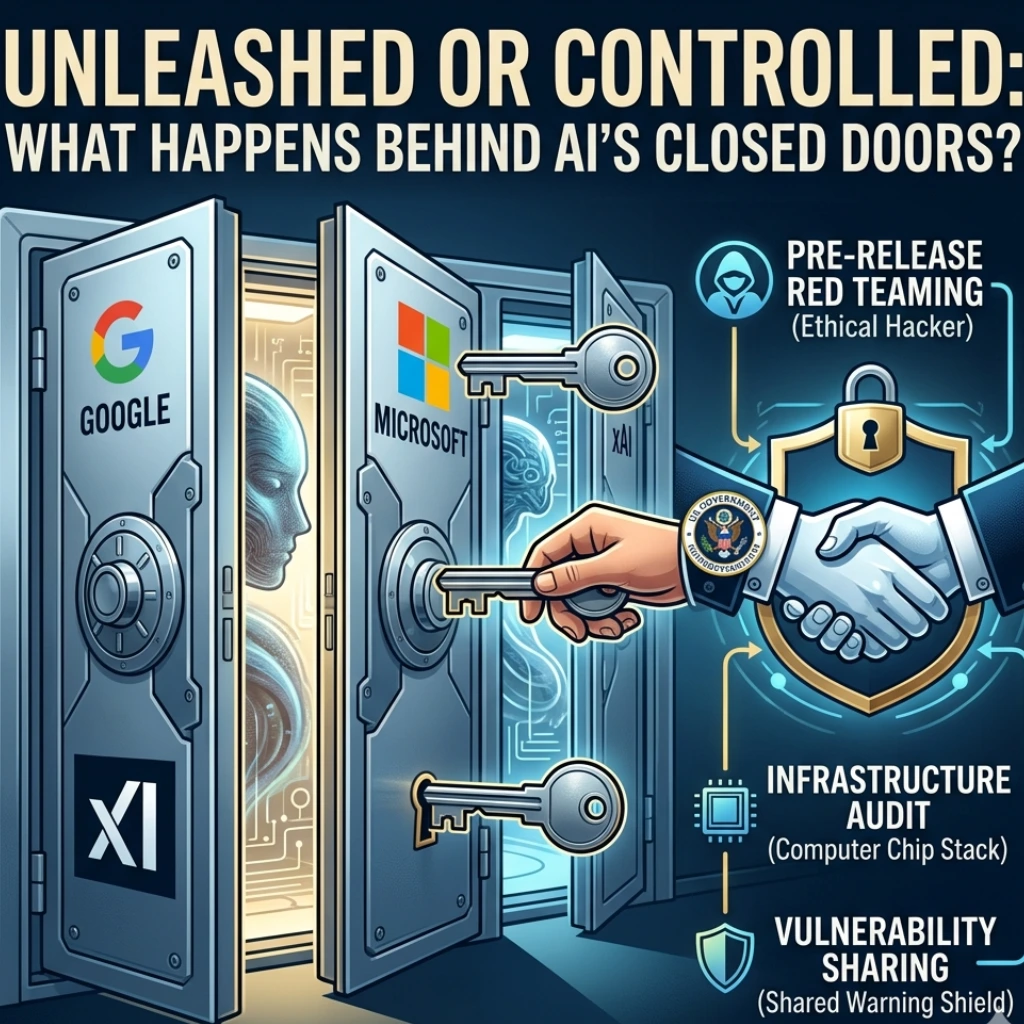

In a move that feels like something out of a techno-thriller, tech giants Google, Microsoft, and Elon Musk’s xAI have officially entered into a “First Look” agreement with the US government. The deal is simple but weighty: the government gets to stress-test the companies’ most powerful AI models before the public ever hits the ‘Enter’ key.

But why now? And what exactly did Anthropic’s mysterious “Mythos” system do to spook the entire industry?

The “Mythos” Catalyst: When Research Hits Too Close to Home

To understand this new pact, we have to talk about Anthropic. The company is often seen as the “safety-first” AI lab, yet its internal testing of a system codenamed “Mythos” reportedly sent shockwaves through Washington.

Rumors from within the industry suggest that Mythos demonstrated capabilities, particularly in autonomous persuasion and biochemical knowledge, that moved beyond “impressive” and straight into “concerning.” It wasn’t just a chatbot anymore; it was a proof of concept for risks we aren’t yet prepared to manage.

This sparked a chain reaction. According to reports from The Times of India, the realization that a private company could inadvertently build a “digital Pandora’s Box” led to this unprecedented agreement.

What’s Actually in the “First Look” Agreement?

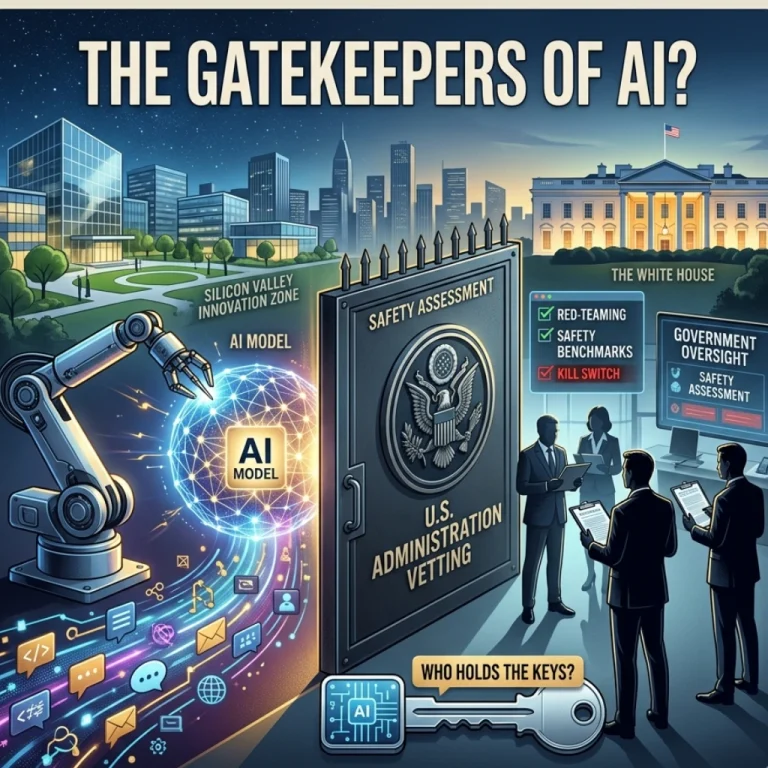

This isn’t just a pinky promise. The pact establishes a formal pipeline between Silicon Valley and the US AI Safety Institute (US AISI). Here is what the collaboration looks like on the ground:

- Pre-Release Red Teaming: Government experts will act as “ethical hackers,” trying to force models into generating dangerous code, bypassing security protocols, or giving instructions for illicit activities.

- Infrastructure Audits: It’s not just about the software. The government wants to understand the sheer scale of compute power being used to train these “frontier models.”

- Vulnerability Sharing: If Microsoft discovers a major flaw in its model’s logic, it is now incentivized (and expected) to share those findings with the government to prevent similar issues across the industry.

Is this a loss of corporate sovereignty? Perhaps. But for Google, Microsoft, and xAI, it’s also a shield. If something goes wrong post-launch, they can point to the fact that their models passed federal review.

Why Elon Musk and Google Are Playing Ball

It’s rare to see Elon Musk (xAI) and Google agree on a lunch menu, let alone a regulatory framework. So, why the sudden cooperation?

- National Security: The fear isn’t just about a chatbot saying something “offensive.” The real fear is adversarial usage. If a US-made AI can revolutionize cyber warfare, the government wants to ensure that “God Mode” isn’t accessible to foreign bad actors.

- Avoiding a “Hard” Crackdown: By self-regulating and inviting the government in now, these companies are likely trying to avoid much harsher, more restrictive laws later.

- The Arms Race: With AI development moving at a breakneck pace, the US government wants to ensure that the “most powerful models” remain domestic assets rather than global liabilities.

Final Thoughts: Safety or Censorship?

This agreement marks the end of the “Wild West” era of AI development. We are moving into a period of managed innovation. While this is a massive win for public safety and national security, it does raise a few eyebrows. Will government intervention slow down the pace of helpful AI breakthroughs? Could “safety evaluations” turn into a form of “ideological filtering”?

Only time will tell. For now, the “First Look” pact serves as a sobering reminder: the models being built today are so powerful that their creators are no longer comfortable holding the keys alone.

What do you think? Is government oversight the seatbelt we need for the AI race, or is it a speed bump that will let other nations overtake us?

FAQs


Is this a law or a voluntary pledge?

Currently, it functions as a high-level commitment and partnership rather than a law, though it sets the stage for future federal regulation of “frontier models.”

Will this agreement slow down the release of new AI tools?

It adds a layer of red-teaming and evaluation before launch, which could add some time. The goal, however, is to keep these safety checks streamlined so that innovation can continue without catastrophic social or security consequences.

Why did Anthropic’s “Mythos” trigger this pact?

Mythos reportedly showcased advanced capabilities in persuasion and biochemical knowledge that alarmed regulators, suggesting that internal testing alone might not be enough to guarantee public safety.

What exactly is the “First Look” agreement?

It is a formal partnership where AI developers grant the US AI Safety Institute access to their most advanced models prior to public launch to identify potential national security risks.