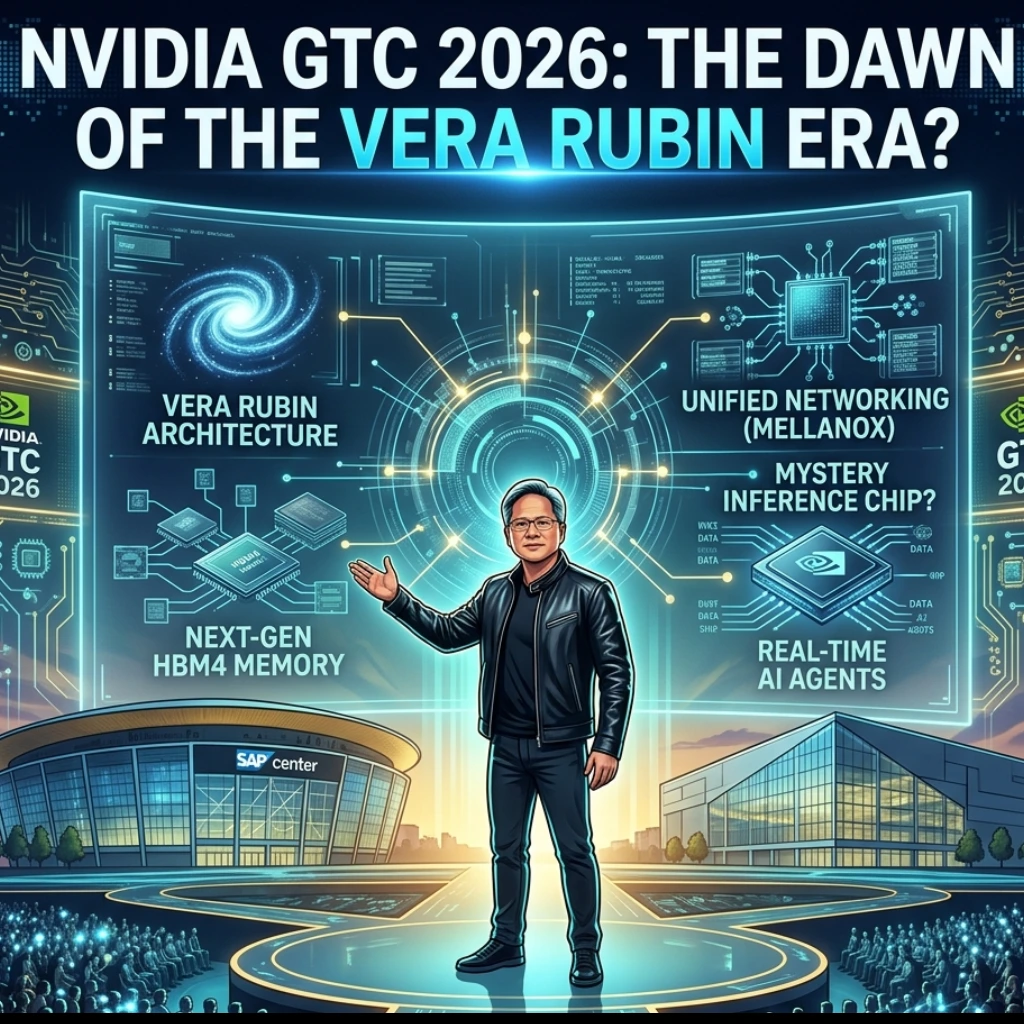

The tech world doesn’t just watch NVIDIA anymore; it waits for it to set the pace of the global economy. As we approach March 16, 2026, all eyes are fixed on San Jose. Why? Because Jensen Huang is stepping onto the stage for the NVIDIA GTC 2026 keynote, and the rumors swirling around this event are more than just hype: they point to a fundamental shift in how artificial intelligence will function.

We’ve moved past the “can AI do this?” phase and entered the “how fast can it scale?” era. With the rumored “Vera Rubin” architecture on the horizon, we might be looking at the most significant hardware leap since the launch of the H100.

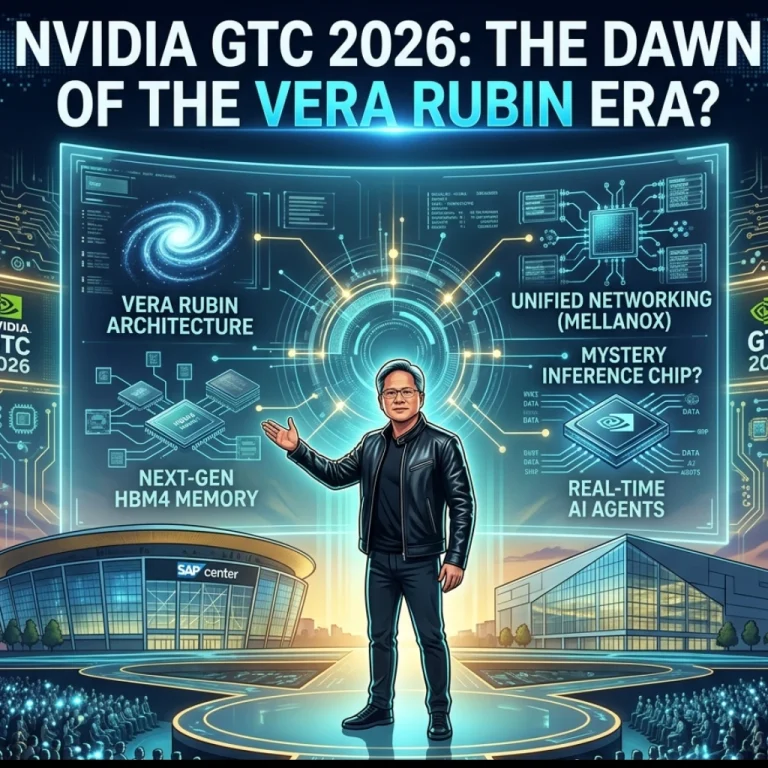

Beyond Blackwell: Why the Vera Rubin Architecture Matters

For the last year, the Blackwell chips have been the gold standard. But in the world of silicon, standing still is the same as moving backward. Named after the pioneering astronomer who provided evidence for dark matter, the Vera Rubin architecture is expected to focus on high-bandwidth memory and unprecedented efficiency.

But what does this mean for the average enterprise?

- Next-Gen HBM4 Memory: Industry insiders suggest Rubin will be the first to fully utilize HBM4 technology, drastically reducing the “memory wall” that currently slows down complex LLMs.

- Unified Networking: Expect deeper integration with the interconnect technology NVIDIA gained through its 2020 Mellanox acquisition, so that data flows between GPUs as fast as it’s processed.
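
The “memory wall” in the first bullet can be made concrete with back-of-the-envelope arithmetic. When a large language model generates text one token at a time, each token requires reading roughly all of the model's weights from memory, so memory bandwidth caps the generation rate. The sketch below is a simplified upper bound, not a benchmark: the function name, the model size, and both bandwidth figures are hypothetical values chosen for illustration, not published HBM4 or Rubin specifications.

```python
def decode_tokens_per_second(params_billion: float,
                             bytes_per_param: float,
                             bandwidth_gb_s: float) -> float:
    """Rough ceiling on single-stream decode speed.

    Assumes every weight is read from memory once per generated token
    and ignores compute time, caching, and batching.
    """
    # 1e9 parameters * bytes each, divided by 1e9 bytes per GB
    weight_gb = params_billion * bytes_per_param
    return bandwidth_gb_s / weight_gb


# Hypothetical numbers for illustration only, not vendor specifications.
baseline = decode_tokens_per_second(params_billion=70, bytes_per_param=2,
                                    bandwidth_gb_s=4000)
doubled = decode_tokens_per_second(params_billion=70, bytes_per_param=2,
                                   bandwidth_gb_s=8000)

print(f"baseline bandwidth: {baseline:.1f} tokens/s at most")
print(f"doubled bandwidth:  {doubled:.1f} tokens/s at most")
```

Under these assumptions the ceiling scales linearly with bandwidth, which is why a faster memory generation matters even if raw compute stays the same.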

Is it possible that Rubin will make current AI clusters look like calculators? If the rumored specs hold, the generational gain in TFLOPS (teraflops) could be in the double digits.

The Pivot to Inference: A New Mystery Chip?

Training a model is expensive, but running it is where the real costs live. Currently, NVIDIA dominates the training market, but the competition is heating up in the inference space. The biggest “buzz” heading into San Jose is a rumored inference-specific chip designed to tackle the high costs of deploying AI at scale.

For a long time, the industry used the same heavy-duty chips for both training and inference. But as AI moves into our phones, cars, and every edge device imaginable, we need something leaner and meaner. A dedicated NVIDIA Inference GPU could:

- Slash Latency: Making real-time AI agents feel truly “human” and instantaneous.

- Lower Power Consumption: Helping data centers meet increasingly strict ESG and energy requirements.

- Optimize “Small” Models: Providing a cost-effective way to run specialized SLMs (Small Language Models) that don’t need the raw power of a Blackwell B200.
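
To see why small models might not need flagship hardware, it helps to estimate how much memory the weights alone occupy. The sketch below uses the standard parameters-times-precision arithmetic. The helper names, the 24 GB device, and the 80% headroom factor are assumptions made for illustration, and the estimate ignores activations and the key-value cache.

```python
def weight_footprint_gb(params_billion: float, bits_per_param: int) -> float:
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_param / 8


def fits_on_device(params_billion: float, bits_per_param: int,
                   device_memory_gb: float, headroom: float = 0.8) -> bool:
    """True if the weights fit within a fraction of device memory.

    `headroom` is a hypothetical safety margin that leaves room for
    activations and the key-value cache.
    """
    budget_gb = device_memory_gb * headroom
    return weight_footprint_gb(params_billion, bits_per_param) <= budget_gb


# Hypothetical device and model sizes, for illustration only.
small_model_gb = weight_footprint_gb(3, 4)    # 3B parameters at 4-bit
large_model_gb = weight_footprint_gb(70, 16)  # 70B parameters at 16-bit

print(f"3B @ 4-bit:   {small_model_gb} GB")
print(f"70B @ 16-bit: {large_model_gb} GB")
print("3B fits in 24 GB: ", fits_on_device(3, 4, 24))
print("70B fits in 24 GB:", fits_on_device(70, 16, 24))
```

The gap between the two footprints is the kind of difference that would let a leaner inference part serve specialized models at lower cost.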

What’s in the “Full AI Stack” Reveal?

Jensen Huang rarely talks about just “chips” anymore. He talks about the “AI Factory.” Reports suggest that NVIDIA GTC 2026 will feature a “Full AI Stack” reveal.

This isn’t just hardware; it’s the CUDA software layer, the Omniverse digital twins, and the NIMs (NVIDIA Inference Microservices) working in perfect harmony. By controlling the entire stack, NVIDIA makes it almost impossible for developers to leave its ecosystem. It’s a “walled garden” that happens to be the most productive place on earth for an AI engineer.

Final Thoughts: The Road to San Jose

As we count down to the 9 a.m. PT session on March 16, the question isn’t whether NVIDIA will impress us, but by how much. Will the Vera Rubin architecture be the final piece of the puzzle for AGI? Or will the new inference chip be the catalyst that finally makes AI profitable for the masses?

One thing is certain: NVIDIA isn’t just a chip company anymore; it is the architect of the intelligence age. Stay tuned, because the San Jose keynote is about to rewrite the playbook.

FAQs


Why is the new architecture named "Vera Rubin"?

NVIDIA has a habit of naming architectures after scientific pioneers. Vera Rubin was the astronomer whose measurements of galaxy rotation provided some of the strongest evidence for dark matter. Naming the 2026 architecture after her fits that tradition, and it makes a convenient metaphor for the "invisible," massive data scales that future AI systems are expected to handle.

How does an "inference-specific" chip differ from a regular GPU?

Think of a regular GPU like a heavy-duty freight train (great for moving massive amounts of training data). An inference chip is more like a high-speed electric delivery van: it’s optimized for quick, low-energy "answers" rather than heavy-duty "learning."

Will GTC 2026 make the Blackwell B200 obsolete?

Not exactly. In the enterprise world, "old" chips usually move to secondary tasks while the flagship (Rubin) takes on the most demanding frontier models. Your Blackwell clusters are still gold, but Rubin is the new platinum.

Can I attend the Jensen Huang keynote virtually?

Historically, NVIDIA has streamed the GTC keynote globally. While the San Jose floor will be packed with developers, the keynote, including any "Full AI Stack" reveal, should be available to watch online.