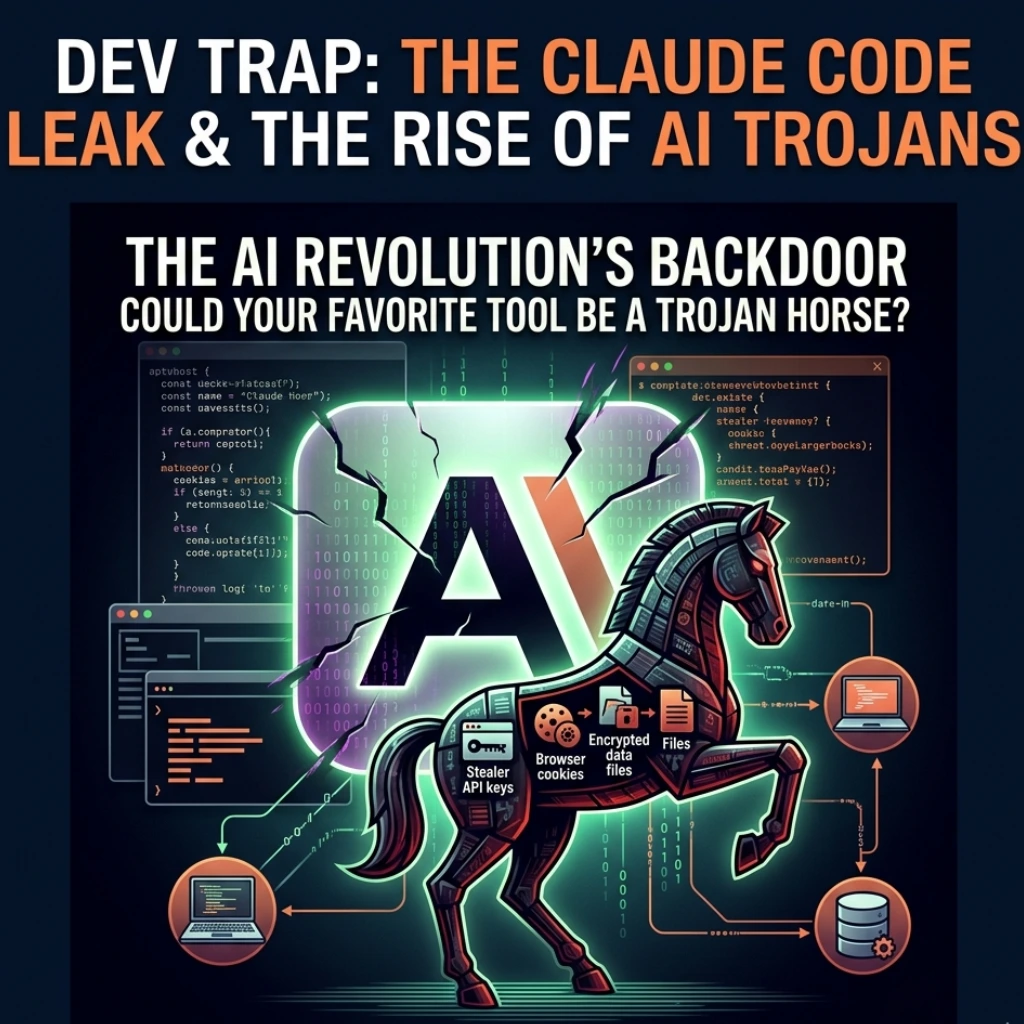

The tech world recently hit a massive speed bump, and if you’re a developer or a security enthusiast, you need to pay attention. We’ve seen data breaches and software vulnerabilities before, but the latest crisis, in which a Claude Code leak was weaponized with malware, marks a shift: attackers are now going after developers through the AI tools they trust.

Could your favorite productivity tool actually be a Trojan horse? That’s the question echoing through the halls of cybersecurity firms this week.

The Breach: What Actually Happened?

The situation escalated quickly when reports surfaced that internal source code and proprietary tools related to Claude Code, Anthropic’s interface for developers, were leaked online. While a leak is bad enough for intellectual property, the real danger emerged when threat actors got their hands on it.

Hackers didn’t just dump the code; they weaponized it. By embedding malicious scripts into the leaked files and redistributing them across developer forums and “mirrored” repositories, they’ve created a security nightmare. As reported by TechBuzz, this isn’t just a corporate headache; it’s a direct threat to anyone trying to get an early look at “leaked” AI features.

Why “Leaked” Tools Are a Cybersecurity Trap

Why are developers falling for this? It’s the “shiny object” syndrome. We all want the latest AI capabilities before they’re officially rolled out. But here’s how the trap works:

- The Lure: Scammers post “unofficial” versions of Claude Code on GitHub or Telegram, promising unrestricted access or “pro” features for free.

- The Payload: Once downloaded, the software executes stealer malware designed to grab API keys, browser cookies, and crypto wallet private keys.

- The Persistence: Because these tools require deep integration into your terminal or IDE, the malware often gains high-level permissions, making it incredibly hard to scrub from your system.

Have you ever stopped to think about whether that “experimental” tool you just cloned is actually looking at your .env files? In this case, that’s exactly what’s happening.
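
One rough way to act on that question is to search a freshly cloned repository for code that touches the things stealers go after before you run anything in it. The sketch below is a minimal heuristic, not a malware detector: the pattern list, the file suffixes, and the `scan_repo` helper are all assumptions made for illustration, and obfuscated code will slip past simple text matching.

```python
import re
from pathlib import Path

# Hypothetical heuristics: a match means "read this line yourself",
# not "this is malware". Adjust the patterns to your own stack.
SUSPICIOUS_PATTERNS = {
    "references .env": re.compile(r"\.env\b"),
    "touches browser cookies": re.compile(r"cookies", re.IGNORECASE),
    "mentions wallet data": re.compile(r"wallet", re.IGNORECASE),
    "dynamic code execution": re.compile(r"eval\s*\(|exec\s*\("),
}

# Only plain-text source files are scanned in this sketch.
TEXT_SUFFIXES = {".js", ".ts", ".py", ".sh", ".json"}


def scan_repo(root):
    """Return (relative_path, line_number, reason) for each suspicious line."""
    root = Path(root)
    findings = []
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in TEXT_SUFFIXES:
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        for number, line in enumerate(text.splitlines(), start=1):
            for reason, pattern in SUSPICIOUS_PATTERNS.items():
                if pattern.search(line):
                    findings.append((str(path.relative_to(root)), number, reason))
    return findings


if __name__ == "__main__":
    for file_name, line_number, reason in scan_repo("."):
        print(f"{file_name}:{line_number}: {reason}")
```

A hit on `.env` in a tool that has no business reading your secrets is a reason to stop and read the surrounding code, ideally before installing any dependencies.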

The Evolution of AI-Driven Attacks

This crisis highlights a broader, more alarming trend. We are moving past the era of simple phishing emails and entering the age of AI supply chain attacks.

Hackers are now using the brand authority of AI giants like Anthropic and OpenAI to mask their intent. By packaging malware inside the leaked Claude Code files, they aren’t just attacking a company; they are poisoning the very tools that developers trust to build the future.

What makes this specific attack so potent? It targets the technical elite. These aren’t people who click on “You’ve won an iPhone” ads. These are engineers who understand code, yet the malware is sophisticated enough (likely obfuscated using AI itself) to bypass standard signature-based antivirus software.
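
To see why obfuscation defeats signature matching, consider the simplest kind of signature: a hash of a known-bad file. The sketch below is a deliberately simplified illustration; real antivirus signatures are richer than a single file hash, and the two "samples" are harmless placeholder strings invented for this example.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical stand-in for a known-malicious script (harmless text here).
original = b"send(read('.env'))"

# Same intent, trivially rewritten: an aliased helper and extra whitespace.
obfuscated = b"s = send\ns( read('.env') )"

# A toy "signature database" containing only the hash of the original sample.
known_bad_hashes = {sha256_hex(original)}


def flagged_by_hash(sample: bytes) -> bool:
    """True if the sample's hash appears in the toy signature database."""
    return sha256_hex(sample) in known_bad_hashes


if __name__ == "__main__":
    print("original flagged:  ", flagged_by_hash(original))
    print("obfuscated flagged:", flagged_by_hash(obfuscated))
```

Any change to the bytes produces a different hash, so the rewritten sample is no longer recognized even though it does the same thing. That is why behavior-based monitoring and isolation matter more than signatures for this class of threat.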

How to Protect Your Workflow

If you’re working in the AI space, you can’t afford to be complacent. Here is how you can stay safe:

- Stick to Official Channels: Only download CLI tools and SDKs directly from the official Anthropic console or verified npm/GitHub accounts.

- Audit Your Permissions: If an AI tool asks for full disk access or permission to read your shell history, ask yourself: Does it really need this?

- Use Sandboxing: Run experimental AI tools in a Docker container or a dedicated VM to isolate your primary machine from potential leaks.
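
The sandboxing advice above can be sketched as a small helper that builds a locked-down `docker run` command. This is one possible starting point under stated assumptions: the `sandbox_command` helper, the `node:20-slim` image, and the `/work` mount point are illustrative choices, and a container is a weaker boundary than a dedicated VM.

```python
import shlex
from pathlib import Path


def sandbox_command(project_dir, image="node:20-slim", command=("sh",)):
    """Build a docker run command that isolates an untrusted project.

    The image name and mount point are assumptions for this sketch.
    """
    project = Path(project_dir).resolve()
    return [
        "docker", "run", "--rm", "-it",
        "--network", "none",                     # no network access at all
        "--read-only",                           # container filesystem is read-only
        "--cap-drop", "ALL",                     # drop all Linux capabilities
        "--security-opt", "no-new-privileges",   # block privilege escalation
        "-v", f"{project}:/work:ro",             # mount only this project, read-only
        "-w", "/work",
        image, *command,
    ]


if __name__ == "__main__":
    # Print the command for review instead of executing it.
    print(shlex.join(sandbox_command(".")))
```

Mounting only the project directory keeps your home directory, SSH keys, and shell history out of reach. With `--network none` the tool cannot call any API, which suits a first inspection; if you later allow network access, pass in only a throwaway API key.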

Final Thoughts: A New Era of Vigilance

The Claude Code security crisis is a wake-up call. As AI continues to integrate into every line of code we write, the attack surface grows with it. We’re no longer just protecting our passwords; we’re protecting the integrity of the machines that help us think.

Is the convenience of a leaked tool worth the risk of a total system compromise? Probably not. The best way to move forward is with curiosity, yes, but also with a healthy dose of skepticism. Stay updated, stay cynical, and keep your API keys locked down tight.

FAQs


How did the Claude Code leak become a malware threat?

The crisis began when internal source code was leaked online. Hackers quickly injected malicious scripts into these files and redistributed them as "unofficial" or "cracked" versions of the tool, tricking developers into installing stealer malware.

Can my antivirus detect the weaponized Claude Code malware?

Not always. Because this malware is often obfuscated or embedded within complex developer tools, it can bypass traditional signature-based antivirus software. It is designed to act silently, targeting .env files and browser cookies.

What should I do if I downloaded an unofficial version of Claude Code?

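Disconnect the affected machine from the internet immediately. Then, from a separate device you trust, rotate all your API keys (Anthropic, OpenAI, AWS, etc.) and sign out of your active browser sessions so that stolen cookies stop working. If possible, perform a fresh OS install on the affected machine to ensure no persistent backdoors remain.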
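
Rotating "all your API keys" is easier with a list of what was exposed. The following sketch builds a rotation checklist from the `.env` files in a project tree, reporting variable names only and never their values. The name pattern and the `rotation_checklist` helper are assumptions for illustration; run something like this from a clean machine against a repository checkout or backup, not on the compromised system.

```python
import re
from pathlib import Path

# Hypothetical rule of thumb for variable names that probably hold secrets.
SECRET_NAME = re.compile(r"KEY|TOKEN|SECRET|PASSWORD", re.IGNORECASE)


def secret_names_in_env_file(path):
    """Return the variable names in a .env-style file that look like secrets."""
    names = []
    text = Path(path).read_text(encoding="utf-8", errors="ignore")
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        name = line.split("=", 1)[0].strip()
        if name.startswith("export "):
            name = name[len("export "):].strip()
        if SECRET_NAME.search(name):
            names.append(name)  # record the name only, never the value
    return names


def rotation_checklist(root):
    """Map each .env* file under root to the secret-like names it defines."""
    root = Path(root)
    checklist = {}
    for env_file in sorted(root.rglob(".env*")):
        if env_file.is_file():
            names = secret_names_in_env_file(env_file)
            if names:
                checklist[str(env_file.relative_to(root))] = names
    return checklist


if __name__ == "__main__":
    for file_name, names in rotation_checklist(".").items():
        print(f"{file_name}: rotate {', '.join(names)}")
```

The checklist covers only secrets stored in `.env` files; keys kept in shell profiles, cloud CLI config directories, or a password manager still need to be reviewed by hand.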