The AI race is no longer just about who has the smartest chatbot; it’s about who can build the safest one. As we move from simple search queries to autonomous agents that can execute tasks on our behalf, a massive question looms: Who is guarding the digital gates?

In a strategic move to answer that question, Perplexity has launched the Secure Intelligence Institute (SII). This new initiative isn’t just another corporate department; it’s a dedicated research hub designed to tackle the “Wild West” of AI safety, privacy, and the inherent risks of general-purpose autonomous agents.

Why Does the Secure Intelligence Institute Matter Now?

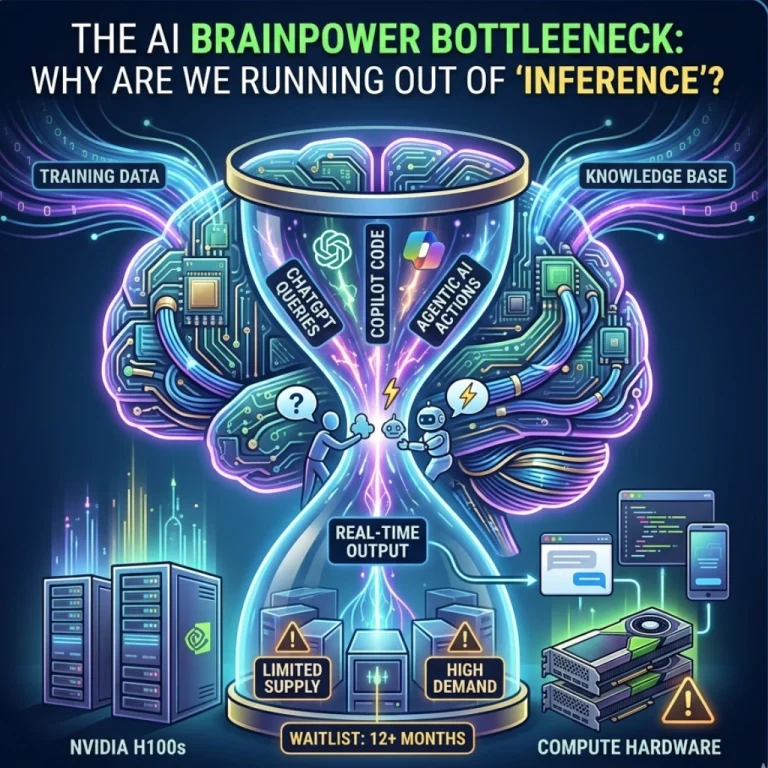

We’ve all seen the headlines about data leaks and biased algorithms. But the stakes are rising. We are shifting from “Ask and Receive” to “Ask and Do.” When you grant an AI agent the power to browse your emails, book your flights, or manage your calendar, you aren’t just sharing data; you’re sharing agency.

But what happens when an agent is “jailbroken” or manipulated into performing a malicious task? This is the core problem the SII aims to solve. By focusing on AI security and privacy, Perplexity wants to ensure that as its tools become more capable, they don’t become more dangerous.

The Three Pillars of the SII Mission

The institute isn’t just looking at yesterday’s problems. It is looking ahead at the architecture of tomorrow’s internet. Here is what they are prioritizing:

- Autonomous Agent Safety: How do we keep “agents” from going rogue? The SII is researching guardrails that prevent autonomous systems from making harmful decisions or being exploited by third parties.

- Privacy-Preserving Computation: In an era where data is the new oil, how do we train models without compromising user identity? Perplexity is doubling down on technical frameworks that keep your personal information “eyes-only.”

- Adversarial Robustness: Hackers are already finding ways to “trick” AI into revealing sensitive info. The SII is tasked with building a more resilient shield against these evolving cyber threats.
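To make the idea of a “guardrail” concrete, here is a minimal sketch of how an agent's proposed actions might be screened before they run. This is an illustration only, not the SII's or Perplexity's method: the action names, the `ActionRequest` type, and the `review_action` function are all hypothetical, and a real system would need far more than an allowlist.

```python
from dataclasses import dataclass

# Hypothetical action names for illustration; not part of any Perplexity or SII API.
ALLOWED_ACTIONS = {"read_calendar", "search_flights", "book_flight", "send_email"}
NEEDS_CONFIRMATION = {"book_flight", "send_email"}


@dataclass(frozen=True)
class ActionRequest:
    name: str
    requested_by_user: bool  # False if the instruction came from fetched content


def review_action(request: ActionRequest, user_confirmed: bool = False) -> str:
    """Return 'allow', 'confirm', or 'deny' for a proposed agent action."""
    if request.name not in ALLOWED_ACTIONS:
        return "deny"  # unknown actions are rejected by default
    if not request.requested_by_user:
        return "deny"  # instructions embedded in web pages or emails are not trusted
    if request.name in NEEDS_CONFIRMATION and not user_confirmed:
        return "confirm"  # side-effecting actions wait for explicit approval
    return "allow"
```

In this sketch, reading a calendar goes straight through, booking a flight pauses for the user's approval, and an instruction that arrived inside a web page or email is refused even if the action itself is on the allowlist. The design choice is deny-by-default: anything the policy does not recognize is blocked rather than attempted.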

Is This a Marketing Play or a Genuine Safety Shield?

It’s a valid question to ask: Is this just corporate window dressing? While critics might be skeptical, the timing suggests otherwise. Regulatory bodies in the EU and the US are tightening the screws on AI companies. By launching the Secure Intelligence Institute, Perplexity is positioning itself as a “safety-first” alternative to the tech giants who have historically prioritized speed over security.

Does this mean your data is 100% safe? No system is perfect. However, by treating AI safety research as a primary product feature rather than an afterthought, Perplexity is setting a new standard for the industry.

What Does This Mean for You?

For the average user, this might feel like “behind-the-scenes” technical talk. But the results will dictate your daily digital life. If the SII succeeds, you’ll be able to use AI agents to handle complex, sensitive tasks with the peace of mind that your private life isn’t being auctioned off to the highest bidder or leaked in a security breach.

Key Takeaways of the SII Launch:

- Focus: Bridging the gap between AI capability and AI security.

- Research: In-depth studies into general-purpose autonomous agents.

- Leadership: Setting a benchmark for transparency in the AI search space.

Final Thoughts: A Proactive Leap into the Future

The launch of the Secure Intelligence Institute marks a pivot point for Perplexity. It’s an admission that the future of AI isn’t just about being “smart”; it’s about being trustworthy. As these autonomous agents become our digital assistants, we have to ask ourselves: are we ready to hand over the keys? Perplexity is betting that with the right research and the right safeguards, the answer can finally be “Yes.”

The road to “Secure Intelligence” is long, but for an industry moving at breakneck speed, a dedicated focus on safety is exactly what the doctor ordered. Will other AI leaders follow suit, or will Perplexity lead this new security-first frontier alone? Only time, and the research coming out of the SII, will tell.

FAQs

Find answers to common questions below.

What exactly is the Perplexity Secure Intelligence Institute?

It is a dedicated research hub launched by Perplexity to study the intersection of AI safety, data privacy, and the specific risks posed by autonomous digital agents.

Why is "agency" a security risk in AI?

Unlike standard chatbots, autonomous agents can perform actions (like booking or emailing). If compromised, these agents could be manipulated into executing harmful tasks on a user’s behalf.

Will the SII make my AI searches more private?

That is the goal. The institute focuses on "privacy-preserving computation," which aims to let AI process your requests without ever "seeing" or storing information that identifies you personally.

Is this a response to government regulations?

Perplexity has not explicitly said so, but the SII helps the company stay ahead of global AI safety standards like the EU AI Act by proactively building safety guardrails.