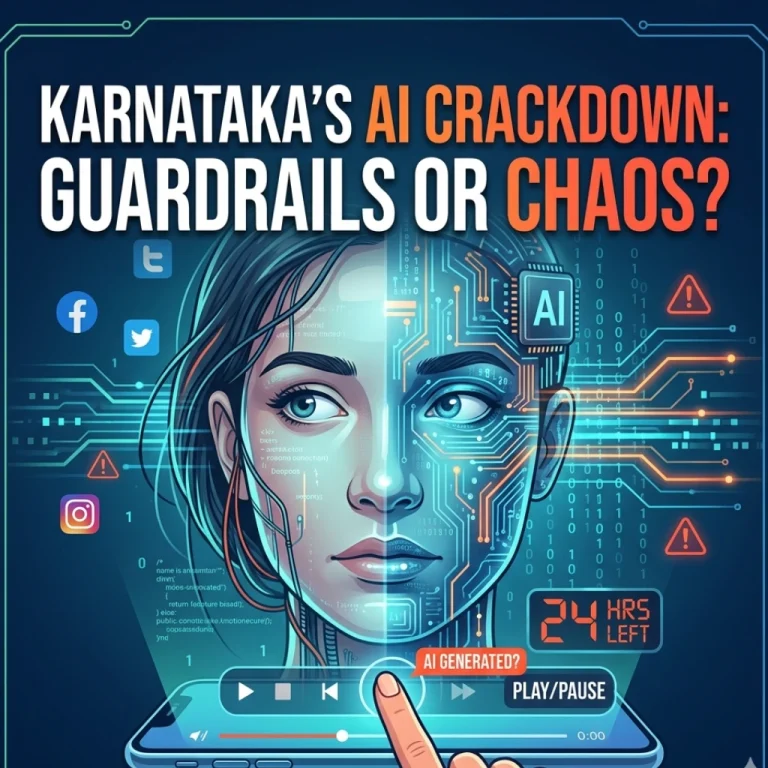

Have you ever scrolled through your feed and paused, wondering if that viral video of a politician or celebrity was actually real? In an era where AI can mimic a human voice or face with terrifying precision, that “pause” is becoming a daily ritual. Karnataka is now stepping in to turn that hesitation into regulation.

The state government has introduced a draft bill aimed at cleaning up the digital Wild West. From mandatory AI labeling to aggressive takedown windows, Karnataka is positioning itself as a pioneer in digital policing. But will these strict rules protect citizens, or will they create a logistical nightmare for platforms?

The 48-Hour Countdown: Speed is the New Standard

One of the most striking features of Karnataka’s proposed bill to tackle misinformation and deepfakes is the sense of urgency it imposes. If the bill passes, social media intermediaries won’t have the luxury of “reviewing” harmful content for days on end.

- 24 to 48 Hours: This is the window platforms get to remove content flagged as harmful content, misinformation, or online harassment.

- The Logic: Viral lies travel faster than the truth. By the time a traditional legal notice is processed, the damage, be it a riot or a ruined reputation, is often already done.

But here is the kicker: how do platforms distinguish between a parody and a malicious deepfake in as little as 24 hours? It’s a high-stakes race that will require tech giants to overhaul their moderation algorithms specifically for the Indian context.

Labeling the Machines: No More “Hidden” AI

We’ve all seen the “AI-generated” tags starting to pop up on platforms like Instagram and YouTube. However, Karnataka wants to move this from a “best practice” to a legal mandate.

Under the draft law, any content generated using Generative AI or deepfake technology must carry a clear label. The goal is simple: transparency. If a machine made it, the human viewing it deserves to know. This moves the burden of proof from the user to the creator and the platform.

Is this the end of the “unfiltered” internet? Perhaps. But in a state like Karnataka, home to Bengaluru, India’s Silicon Valley, the irony isn’t lost on anyone. The very city building the world’s AI is now the first to demand a leash for it.

Curbing Online Harassment and Misinformation

Beyond the flashy tech of deepfakes, the bill takes a hard swing at the persistent plague of online harassment. Karnataka has seen a rise in cyber-bullying and the weaponization of personal data.

The bill proposes:

- Strict Accountability: Platforms can no longer hide behind “safe harbor” status if they fail to act on reports of harassment.

- Localized Redressal: A focus on ensuring that grievances from Karnataka’s citizens are addressed with local linguistic and cultural context in mind.

Why does this matter now? With major elections and social shifts always on the horizon, the spread of coordinated misinformation can destabilize public order. Karnataka’s move suggests the state no longer trusts tech companies to regulate themselves.

Final Thoughts: A Blueprint for the Rest of India?

Karnataka isn’t just writing a state law; it’s drafting a blueprint. As the Union government continues to work on the Digital India Act, Karnataka’s proactive stance could force the hand of federal regulators.

Can a single state really dictate how global tech giants operate? It’s a bold gamble. While the intent of protecting digital dignity is noble, the execution will be the real test. Will we see a safer internet, or will the fear of heavy penalties lead to over-censorship?

One thing is certain: the days of “post first, ask questions later” are coming to an abrupt end in Karnataka. The digital world is getting some much-needed guardrails.

FAQs

Find answers to common questions below.

Will my AI-generated art be illegal under Karnataka’s new law?

Not illegal, but it will likely need a "Made by AI" badge. The law focuses on transparency so users aren't misled by hyper-realistic synthetic media.

What happens if a platform misses the 48-hour removal deadline?

The draft proposes strict penalties, which could include heavy fines or the loss of "safe harbor" protection, making the platform legally liable for the content.

Can this law actually stop the spread of viral misinformation?

While no law is a silver bullet, the 24-to-48-hour window aims to "break the chain" of virality before a deepfake can cause real-world harm.

Does this bill affect private WhatsApp messages?

The bill primarily targets "intermediaries" and public platforms, though the balance between encryption and tracking misinformation remains a heated part of the debate.