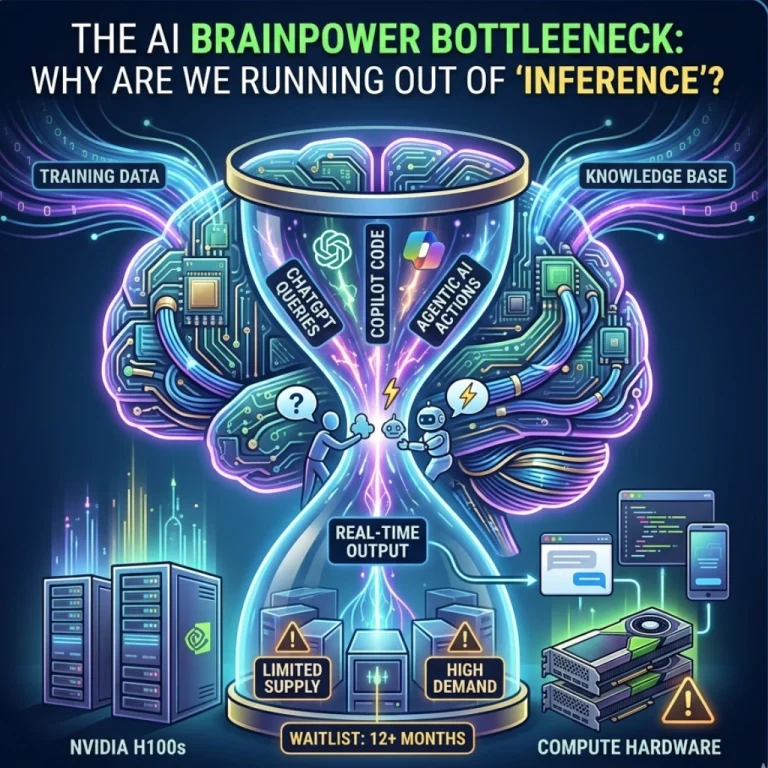

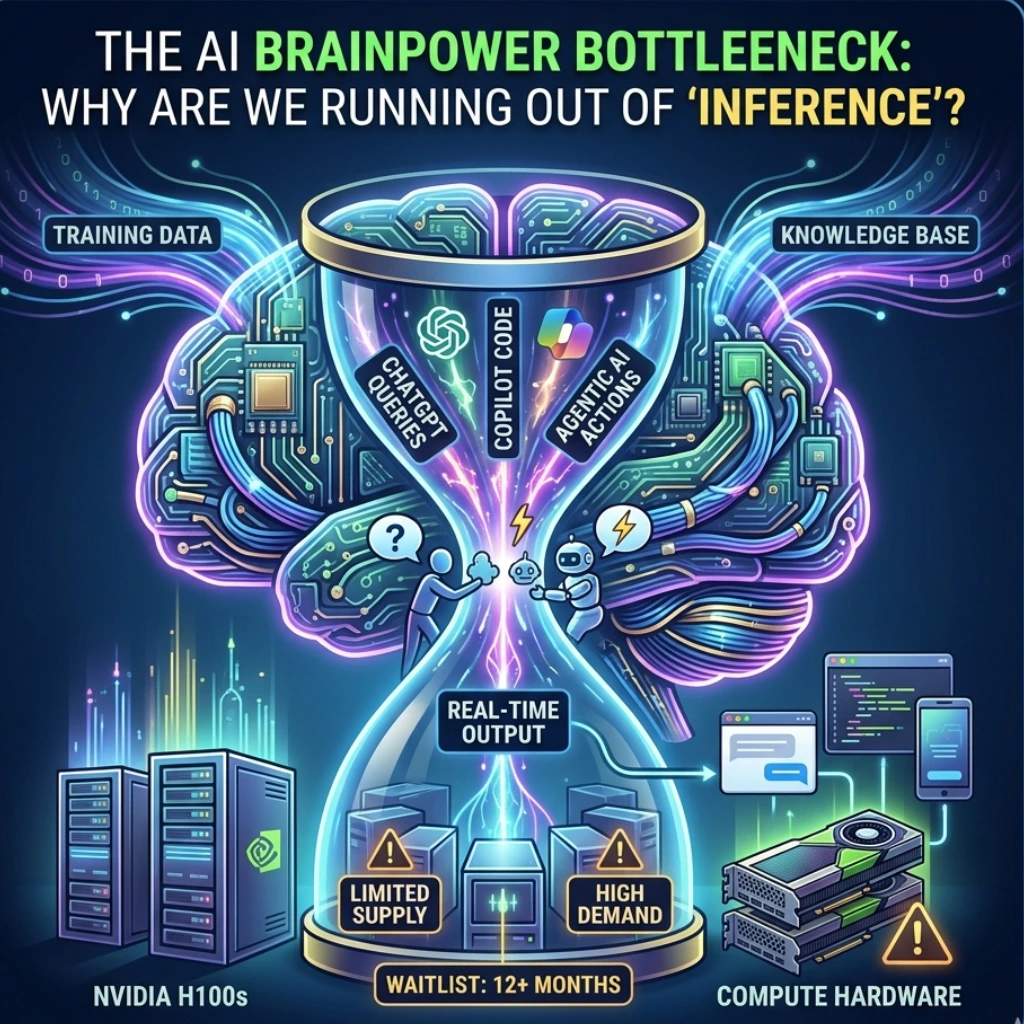

Remember when the biggest hurdle in AI was just building the damn thing? For the last two years, the tech world has been obsessed with “training”: the massive, months-long process of feeding data into LLMs. But according to Microsoft AI CEO Mustafa Suleyman, the goalposts have just moved.

The era of training dominance is cooling off, and a new bottleneck has arrived: inference compute.

In a recent industry-shaking statement, Suleyman noted that for the next couple of years, the entire AI industry will be defined by the scarcity of inference. But what does that actually mean for the average business or developer? Let’s break down why the “brain power” required to run AI in real-time is becoming the most valuable resource on earth.

What is Inference, and Why are We Running Out of It?

To put it simply: Training is like a student studying for a PhD. Inference is that student actually answering questions in the real world.

Every time you ask ChatGPT for a recipe or use a Copilot to write code, you are consuming “inference compute.” As millions of users move from “experimenting” with AI to “integrating” it into their daily workflows, the demand for these real-time calculations has exploded.
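To make the training-versus-inference comparison concrete, here is a rough back-of-the-envelope sketch. It assumes two common rules of thumb (training costs about 6 FLOPs per parameter per training token, and generating one token costs about 2 FLOPs per parameter) and uses entirely made-up numbers for model size and traffic. None of these figures come from Suleyman or any vendor.

```python
# Back-of-the-envelope comparison of one-time training compute vs. recurring
# inference compute. The 6*N*D and 2*N rules of thumb are approximations, and
# every number below is a hypothetical illustration, not a measurement.

def training_flops(params: int, training_tokens: int) -> int:
    """Approximate one-time training cost: ~6 FLOPs per parameter per token."""
    return 6 * params * training_tokens


def inference_flops_per_day(params: int, requests_per_day: int,
                            tokens_per_request: int) -> int:
    """Approximate daily serving cost: ~2 FLOPs per parameter per generated token."""
    return 2 * params * requests_per_day * tokens_per_request


def days_until_inference_exceeds_training(params: int, training_tokens: int,
                                          requests_per_day: int,
                                          tokens_per_request: int) -> float:
    """Days of serving after which cumulative inference compute passes training."""
    return (training_flops(params, training_tokens)
            / inference_flops_per_day(params, requests_per_day, tokens_per_request))


# Hypothetical model and traffic (assumptions for illustration only).
PARAMS = 70_000_000_000                 # 70B parameters
TRAINING_TOKENS = 2_000_000_000_000     # 2T training tokens
REQUESTS_PER_DAY = 100_000_000          # 100M requests per day
TOKENS_PER_REQUEST = 1_000              # tokens generated per request

days = days_until_inference_exceeds_training(
    PARAMS, TRAINING_TOKENS, REQUESTS_PER_DAY, TOKENS_PER_REQUEST
)
print(f"Inference overtakes training after about {days:.0f} days")
```

With these hypothetical inputs, cumulative inference compute passes the one-time training cost after about 60 days. Every day after that, serving the model is the bigger bill, which is the intuition behind the shift described above.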

We’ve reached a tipping point. It’s no longer about how smart the model is; it’s about whether you have the hardware available to let the model speak.

The One-Year Wait: The GPU Crisis Evolves

If you thought getting your hands on a PS5 at launch was hard, try ordering a thousand Nvidia H100s.

Reports suggest that GPU lead times have now stretched to nearly a year. Imagine being a startup with a brilliant AI idea, only to be told you can’t actually run your service until 2026 because the “engines” are backordered.

This scarcity is creating a massive divide in the tech landscape:

- The “Compute Rich”: Tech giants like Microsoft, Google, and Meta who hoarded chips early.

- The “Compute Poor”: Innovators and mid-sized firms forced to optimize every single token to stay afloat.

Is the industry ready for a world where your growth is capped not by your code, but by your hardware queue?

Why “Real-Time” is the New Battleground

The shift toward inference is driven by the rise of agentic AI and reasoning models. We are moving away from simple chatbots toward systems that “think” before they speak (like OpenAI’s o1 model).

These models use “test-time compute,” meaning they spend more time processing during the inference phase to deliver a better answer. This is great for accuracy, but it’s a nightmare for hardware availability.

- Higher Latency: The model works through extra intermediate reasoning before it answers, so each response takes longer and uses more “brain cycles.”

- Cost Spikes: Running high-level reasoning models is significantly more expensive than standard LLMs.

- Energy Constraints: Data centers are hitting power grid limits trying to keep up with the electricity these chips draw and the heat they generate.
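The latency and cost points above can be sketched with simple arithmetic. This is a minimal illustration, assuming hidden reasoning tokens are generated and billed like ordinary output tokens. The throughput, price, and token counts are hypothetical placeholders, not figures for any real model or provider.

```python
# Rough illustration of how test-time compute inflates latency and cost.
# Assumption: reasoning tokens are generated and billed like output tokens.
# All numeric values below are hypothetical.
from dataclasses import dataclass


@dataclass
class Request:
    visible_tokens: int        # tokens the user actually sees in the answer
    reasoning_tokens: int = 0  # extra "thinking" tokens produced first

    @property
    def generated_tokens(self) -> int:
        return self.visible_tokens + self.reasoning_tokens


def decode_seconds(req: Request, tokens_per_second: float) -> float:
    """Time spent generating tokens at a fixed decoding speed."""
    return req.generated_tokens / tokens_per_second


def decode_cost(req: Request, price_per_million_tokens: float) -> float:
    """Cost of the generated tokens at a flat per-token price."""
    return req.generated_tokens * price_per_million_tokens / 1_000_000


TOKENS_PER_SECOND = 50.0          # hypothetical decoding speed
PRICE_PER_MILLION_TOKENS = 10.0   # hypothetical price in dollars

standard = Request(visible_tokens=500)
reasoning = Request(visible_tokens=500, reasoning_tokens=4_500)

for name, req in [("standard", standard), ("reasoning", reasoning)]:
    seconds = decode_seconds(req, TOKENS_PER_SECOND)
    dollars = decode_cost(req, PRICE_PER_MILLION_TOKENS)
    print(f"{name}: {req.generated_tokens} tokens, {seconds:.0f} s, ${dollars:.3f}")
```

In this made-up scenario the visible answer is the same length in both cases, yet the reasoning request generates ten times as many tokens, so it ties up the hardware ten times as long and costs ten times as much. The sketch ignores prompt processing and batching, which change the absolute numbers in practice.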

Final Thoughts: Efficiency is the New Innovation

Mustafa Suleyman’s warning is a wake-up call. If inference is the bottleneck for the next couple of years, the winners won’t just be the ones with the biggest models. The winners will be those who master AI efficiency.

We are likely to see a massive surge in “Small Language Models” (SLMs) and specialized hardware designed specifically for inference rather than training.

The question is no longer “Can AI do this?” It’s “Can we afford the compute to let it try?” For now, the industry is holding its breath, waiting for the silicon to catch up with the ambition.

FAQs


Why is inference compute suddenly more important than training?

Because we've moved from "building" models to "using" them. As millions of people hit 'Enter' on prompts simultaneously, the hardware required to deliver those instant answers is being stretched to its absolute limit.

How do nearly year-long GPU lead times affect AI startups?

They create a "hardware moat." Smaller companies may struggle to scale their products in real time, potentially forcing them to rely on the cloud infrastructure of tech giants like Microsoft or Google.

Can software optimization solve the compute bottleneck?

To an extent. Developers are now focusing on "quantization" (storing model weights at lower numeric precision so the model takes less memory and runs on less hardware) and "Small Language Models" (SLMs) to squeeze more performance out of the limited hardware available.
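As a minimal sketch of the quantization idea, the snippet below applies symmetric 8-bit quantization to a handful of weights and estimates how much memory a model's weights need at different precisions. The function names, the sample weights, and the 7-billion-parameter model are all hypothetical. Production toolchains use more sophisticated schemes (per-channel scales, calibration data, 4-bit formats).

```python
# Minimal sketch of symmetric per-tensor int8 quantization in pure Python.
# Function names and sample values are illustrative assumptions only.

def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


def weight_memory_gb(num_params, bits_per_weight):
    """Memory needed to store the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


weights = [0.12, -0.5, 0.33, 0.0, 0.49]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", quantized)
print("largest rounding error:", max_error)

# Hypothetical 7-billion-parameter model at three precisions.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gb(7e9, bits):.1f} GB")
```

The trade-off is visible in the sketch itself: each weight loses a little precision (bounded by half the scale), and in exchange the same hypothetical model needs half or a quarter of the memory, which means fewer or cheaper chips per deployment.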

What is "test-time compute" and why does it drain resources?

It’s the AI "thinking" longer before it answers: the model works through extra reasoning steps at inference time instead of replying immediately. While this makes the AI much better at math and logic, it consumes significantly more electricity and chip time per question asked.